Stop Hunting for Stock: How to Generate Custom B-Roll Directly in Premiere Pro

Lewis Shatel

5 min read

18 Nov 2025

The 'Stock Footage Tax': Why Searching for B-Roll Is an Expensive Time-Sink

You know the drill. You're deep in a corporate edit, the timeline is humming along, and then you hit a 4-second gap where the client forgot to film the product on a table. Simple, right? Just grab some B-roll. So you tab out of Premiere, open Storyblocks, type "product close-up minimalist" and start scrubbing. Forty-five minutes later, you've got seventeen browser tabs open, a growing sense of existential dread, and a clip that's almost right but the color temperature is slightly off and there's a logo on the mug in the background that legal is going to flag.

That time is billable. That time is yours. And it's being eaten alive by the stock footage hunt.

The Frustration of 'Almost Right' Clips and the Subscription Bloat of Envato/Storyblocks

The fundamental problem with stock libraries isn't that the footage is bad. Some of it is genuinely well-shot. The problem is that it was shot for someone else's project. You're always compromising. The lighting is close. The motion is close. The vibe is close. But "close" in a tight edit is the difference between a piece that feels cohesive and one that feels assembled from spare parts.

Then there's the cost reality nobody talks about enough. You're probably sitting on at least two or three of these subscriptions right now. Storyblocks at $30/month. Envato Elements at $16.50/month. Maybe a Getty or Pond5 credit pack on top of that for the one shot you couldn't find anywhere else. Add it up. You're spending $50–$80 a month on the privilege of scrubbing through footage that doesn't quite fit.
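
As a rough sanity check on that range, here is a minimal sketch of the arithmetic. The two subscription prices are the ones quoted above; the credit-pack amount is a placeholder assumption, not a real Getty or Pond5 price.

```python
# Monthly prices quoted in the paragraph above (USD).
STORYBLOCKS = 30.00
ENVATO_ELEMENTS = 16.50


def monthly_stock_spend(credit_pack: float = 0.0) -> float:
    """Total monthly stock spend: two subscriptions plus an optional credit pack."""
    return STORYBLOCKS + ENVATO_ELEMENTS + credit_pack


def yearly(monthly: float) -> float:
    """Annualize a monthly figure."""
    return monthly * 12


base = monthly_stock_spend()  # subscriptions only
# Hypothetical credit-pack spend, chosen only to land inside the quoted range.
with_pack = monthly_stock_spend(credit_pack=25.00)

print(f"Subscriptions only: ${base:.2f}/month, ${yearly(base):.2f}/year")
print(f"With a $25 pack:    ${with_pack:.2f}/month, ${yearly(with_pack):.2f}/year")
```

The two subscriptions alone come to $46.50 a month, so even a small top-up puts you inside the $50–$80 range.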

And the download-move-import dance? Don't get me started. Download to your Downloads folder, move it to your project's asset directory, import it into Premiere, drop it into a bin, rename it so future-you knows what it is. For a four-second clip. That workflow is broken, and we've all just accepted it because there was no alternative.

Until now.

The GenAI Hub: Bringing the World's Best Video Models Into Your Timeline

What's changed in the last eighteen months is that video generation models have gotten genuinely good. Not "good for AI" good. Actually good. Kling 2.0 can produce smooth, cinematic motion. Runway Gen-4 handles texture and lighting with a level of control that was unthinkable two years ago. Google's Veo 3 is producing clips that will make you do a double-take. These aren't novelty tools anymore. They're production-grade assets waiting to be pointed at your specific problem.

The issue has been integration. Every one of these models lives in its own web app, its own account, its own billing system. So instead of solving the tab-switching problem, you've just traded Storyblocks tabs for Runway tabs and Kling tabs. You've added subscriptions instead of replacing them.

Why a Pay-As-You-Go Credit System Beats Five Different AI Subscriptions

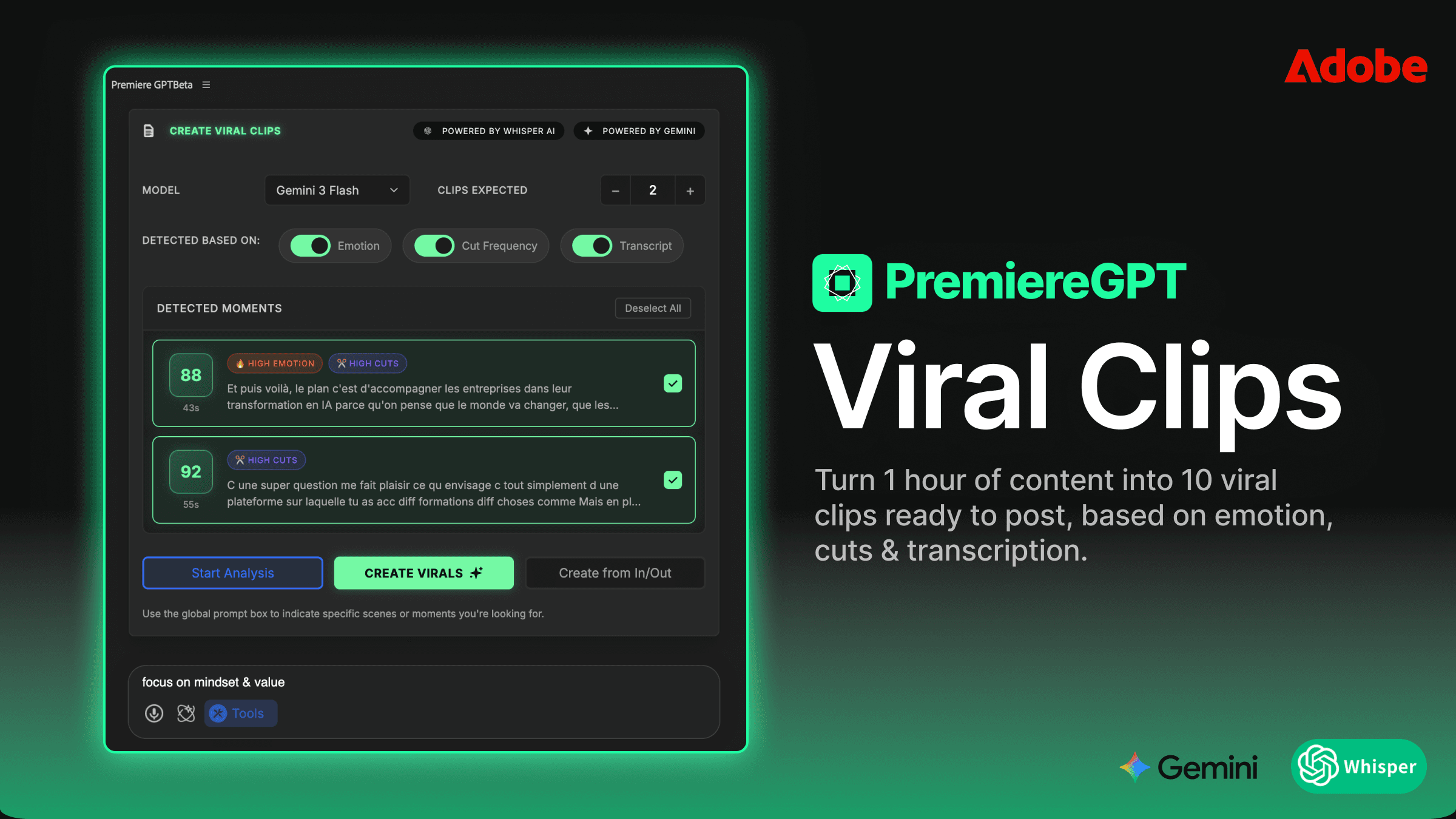

This is where the economics get interesting. Tools like PremiereGPT are building what's essentially a unified GenAI hub that lives inside a Premiere Pro panel. One integration, multiple models, one credit pool. You're not paying $30/month to Runway whether you use it or not. You're spending credits when you actually generate something.

Think about your actual usage pattern. On a busy week, you might need AI-generated B-roll on three or four projects. On a slow week, maybe zero. A flat subscription charges you the same either way. A credit-based system means your cost scales with your actual workload. For most editors, that's a significant saving — we're talking potentially cutting your AI tooling costs by 60–70% compared to maintaining separate subscriptions across Runway, Kling, and an image generation tool simultaneously.
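
To see where a figure like that could come from, here is a minimal sketch comparing a flat bill with a per-clip bill. Only Runway's $30/month appears in this article; the other two subscription prices, the per-clip credit price, and the clip count are all illustrative assumptions, so plug in your own numbers.

```python
def flat_cost(subscriptions: dict[str, float]) -> float:
    """Monthly cost of flat subscriptions, independent of usage."""
    return sum(subscriptions.values())


def credit_cost(clips: int, price_per_clip: float) -> float:
    """Monthly cost under pay-as-you-go: you pay only for clips generated."""
    return clips * price_per_clip


def savings_fraction(flat: float, credits: float) -> float:
    """Share of the flat bill saved by paying per clip (negative if credits cost more)."""
    return (flat - credits) / flat


# Runway's $30/month is quoted in the article; the other two prices are assumptions.
subs = {"runway": 30.0, "kling": 10.0, "image_tool": 10.0}
flat = flat_cost(subs)

# Hypothetical month: 20 clips at an assumed $0.80 of credits each.
PRICE_PER_CLIP = 0.80
credits = credit_cost(clips=20, price_per_clip=PRICE_PER_CLIP)

print(f"flat: ${flat:.2f}, credits: ${credits:.2f}, "
      f"saved: {savings_fraction(flat, credits):.0%}")

# Break-even volume: above this many clips per month, the flat plans win.
break_even_clips = flat / PRICE_PER_CLIP
print(f"break-even at about {break_even_clips:.1f} clips/month")
```

With these assumed numbers the saving lands inside the 60–70% range, but the result depends entirely on your clip volume and the real per-clip price. Heavy users past the break-even point would be better off on flat plans.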

More importantly, having Veo, Kling, and Runway accessible from a single panel inside Premiere means you're choosing the right tool for the job rather than the tool you happen to be subscribed to this month. That's a fundamentally different creative workflow.

Workflow: From Prompt to Timeline in 60 Seconds

Let's be concrete about what this actually looks like in practice, because the workflow shift is the whole point.

You're in your sequence. You've got a 3.5-second gap where the interview subject is talking about their morning routine and you have zero coverage footage. Old workflow: stop, tab out, hunt, compromise, download, move, import, drop. New workflow: open the PremiereGPT panel, type your prompt, hit generate, and the asset lands directly in your project bin — ready to drag to the timeline.

No tab-switching. No Downloads folder archaeology. No re-importing. The asset is already inside Premiere.

How to Use the PremiereGPT Panel to Generate Assets That Match Your Sequence Settings

The panel is context-aware in ways that matter to working editors. When you're generating a video clip, you're not just throwing a text prompt into a void and hoping the output matches your 4K 23.976fps timeline. The tool respects your sequence settings. Frame rate, resolution, aspect ratio — these feed into the generation parameters so you're not getting a 1080p clip when your sequence is set to 4K, or a 30fps clip that's going to stutter in a 24fps edit.
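
Conceptually, that matching step might look like the sketch below. This is not PremiereGPT's actual API: the `SequenceSettings` type, the payload field names, and the choice of UHD (3840x2160) for "4K" are all assumptions made for illustration. The one hard fact it leans on is that "23.976 fps" is exactly 24000/1001.

```python
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class SequenceSettings:
    """Hypothetical snapshot of the active sequence."""
    width: int
    height: int
    fps: Fraction  # exact rational, e.g. 24000/1001 for "23.976"


def generation_params(seq: SequenceSettings, prompt: str) -> dict:
    """Build a request payload that mirrors the sequence (field names are made up)."""
    ratio = Fraction(seq.width, seq.height)  # reduces 3840/2160 to 16/9
    return {
        "prompt": prompt,
        "width": seq.width,
        "height": seq.height,
        "aspect_ratio": f"{ratio.numerator}:{ratio.denominator}",
        "fps": float(seq.fps),
    }


def matches_sequence(clip_w: int, clip_h: int, clip_fps: Fraction,
                     seq: SequenceSettings) -> bool:
    """True if a clip drops in without scaling or frame-rate conforming."""
    return (clip_w, clip_h, clip_fps) == (seq.width, seq.height, seq.fps)


uhd_23976 = SequenceSettings(3840, 2160, Fraction(24000, 1001))
params = generation_params(uhd_23976, "slow dolly over a product on a table")
print(params["aspect_ratio"], round(params["fps"], 3))

# A 1080p, 30 fps clip would need both upscaling and a frame-rate conform.
print(matches_sequence(1920, 1080, Fraction(30), uhd_23976))
```

Storing the frame rate as a fraction rather than a float avoids false mismatches between "23.976" and "23.98" style roundings.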

The generation workflow breaks down like this:

Video generation: Write a descriptive prompt — lighting conditions, camera motion, subject, mood. Select your preferred model (more on model selection below). Generate. The clip lands in your designated bin.

Image generation: Same process for stills. Useful for lower thirds backgrounds, texture overlays, title cards, or freeze-frame replacements when your source footage has a bad frame you can't cut around.

Voice generation: Need a quick VO line to fill a gap while you're waiting on client approval? Generate it directly in the panel. It drops into your audio tracks like any other asset.
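
If you want a repeatable way to hit the four elements a video prompt should cover (subject, lighting, camera motion, mood), a tiny helper like the one below keeps you honest. It is a sketch only: the function name, the field order, and the comma-separated format are assumptions, and none of the models require this exact structure.

```python
def build_prompt(subject: str, lighting: str, camera_motion: str, mood: str) -> str:
    """Join the four descriptive elements into one comma-separated prompt."""
    parts = [subject, lighting, camera_motion, mood]
    # Drop empty or whitespace-only fields so a missing element leaves no stray comma.
    return ", ".join(p.strip() for p in parts if p and p.strip())


prompt = build_prompt(
    subject="hands pouring coffee into a plain white mug",
    lighting="soft morning window light",
    camera_motion="slow push-in",
    mood="calm, unhurried",
)
print(prompt)
```

Keeping the elements as separate arguments makes it easy to vary one (say, the lighting) while holding the rest constant across several generations.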

The bin management piece is underrated. Because assets generate directly into Premiere, you can set up a dedicated "AI Generated" bin in your project and route everything there. Your project stays organized. You know exactly what's AI-generated versus client-provided footage. That matters when you're doing a final asset audit before delivery.

The 60-second claim isn't marketing fluff. For a simple B-roll prompt on a fast model like Kling, you're genuinely looking at prompt-to-bin in under a minute. Veo 3 takes a bit longer due to generation complexity, but even then you're talking two to three minutes versus the 45-minute stock hunt. The math is not close.

Pro Tip: Using AI to Fill Those 'Awkward Gaps' in Your Edit Where the Client Forgot to Film Coverage

Every editor has a project folder with a subfolder mentally labeled "the coverage they didn't shoot." The client filmed the interview. They filmed the product. They did not film anyone actually using the product, which is the one shot that would make the whole thing work. You've asked. The shoot is wrapped. The location is unavailable. You're on your own.

This is the use case where AI B-roll goes from "interesting experiment" to "genuinely saved the project." Because you're not trying to match a specific person or a specific location — you just need a person using a product in a context that makes narrative sense. That's exactly what these models are built for.

Some specific scenarios where this workflow has proven out:

Abstract concept coverage: Client is talking about "digital transformation" or "scalability" and you need something that isn't a tired stock shot of people pointing at whiteboards. Generate something abstract, techy, and specific to their brand color palette.

Location establishing shots: You need a city skyline but the client only shot indoors. Generate a dusk aerial that matches the warm grade you've already applied to the rest of the piece.

Texture and atmosphere overlays: Bokeh light leaks, particle overlays, environmental textures — generate these as image assets and composite them over your existing footage to add depth without a separate stock subscription.

Cutaway close-ups: Hands on a keyboard, coffee being poured, a phone screen lighting up. These are the workhorses of corporate B-roll and they generate cleanly and quickly on any of the major models.

The key mental shift is this: stop thinking of AI generation as a last resort when stock fails. Start treating it as your first move for any coverage gap. The specificity you get from a well-written prompt will almost always beat the "close enough" you get from scrubbing a library.

Quality Check: Which Model Should You Use for What? (Veo vs. Kling vs. Runway)

Having three models available in one panel is only useful if you know which one to reach for. They're not interchangeable. Each has a distinct performance profile, and using the wrong one for a given shot type is how you end up with weird artifacts and wasted credits.

Here's the working editor's breakdown:

Veo 3 (Google) is your cinematic heavy hitter. It handles complex scenes with multiple elements, realistic lighting physics, and nuanced motion better than the other two models in the panel. Use it for hero shots: establishing scenes, dramatic environmental footage, anything where visual quality is the primary concern and you can spare the two to three minutes a generation takes. It's also notably strong on prompt adherence, meaning what you describe is what you get, with less randomness than the other models. The tradeoff is generation time and credit cost per clip.

Kling 2.0 is your workhorse. Fast generation, consistent quality, excellent at smooth camera motion and clean subject movement. For the bread-and-butter B-roll shots — close-ups, simple environments, product footage — Kling hits the quality bar you need at a speed that keeps your edit moving. If you're filling multiple gaps in a single session, Kling is probably where you'll spend most of your credits. It's also notably good at maintaining motion consistency across a clip, which matters when you're cutting between AI-generated shots.

Runway Gen-4 is your texture and style specialist. Where Runway earns its place is in stylized content — anything where you want a specific aesthetic treatment, painterly looks, high-contrast stylized environments, or abstract visuals. It also handles image-to-video prompting well, which is useful if you've generated a still in the panel and want to animate it. For straightforward realistic B-roll, Kling or Veo will usually outperform it, but for branded stylized content, Runway's aesthetic control is unmatched.

A practical decision tree: Need it fast and realistic? Kling. Need it cinematic and complex? Veo. Need a specific style or aesthetic treatment? Runway. That framework will cover 90% of your generation decisions without overthinking it.
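
Written out as code, that rule of thumb is only a few lines. This is a sketch of the heuristic above, not a feature of the panel; the function name and the precedence order (style first, then complexity, then speed) are assumptions.

```python
from enum import Enum


class Model(Enum):
    KLING = "Kling 2.0"
    VEO = "Veo 3"
    RUNWAY = "Runway Gen-4"


def pick_model(stylized: bool = False, complex_scene: bool = False) -> Model:
    """Encode the three-way rule of thumb; the precedence order is an assumption."""
    if stylized:
        return Model.RUNWAY  # specific style or aesthetic treatment
    if complex_scene:
        return Model.VEO     # cinematic, multi-element hero shots
    return Model.KLING       # fast, realistic bread-and-butter B-roll


print(pick_model().value)
print(pick_model(complex_scene=True).value)
print(pick_model(stylized=True).value)
```

Kling is the default branch on purpose: most coverage gaps are simple realistic shots, so you only pay the extra time and credits when a shot clearly calls for it.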

The goal isn't to use AI for everything. The goal is to eliminate the specific, painful, time-wasting workflow of hunting for stock footage that almost fits. These tools solve that exact problem, inside the application you're already in, without blowing up your monthly subscription budget.

If you want to get up to speed fast on writing prompts that actually produce usable B-roll — not the vague, washed-out outputs you get from lazy prompting — we put together a practical reference guide specifically for editors working inside PremiereGPT.

The GenAI Prompt Cheat Sheet for Editors is a free PDF with 50+ proven prompts organized by shot type: cinematic establishing shots, product close-ups, abstract concept coverage, texture overlays, and more. Every prompt has been tested against Veo, Kling, and Runway so you know which model to pair it with. It's the reference doc you'll keep open next to your timeline.

Download it, drop it in your project templates folder, and the next time you hit a coverage gap at hour nine of an edit, you'll have exactly what you need to fill it in under a minute.